In short, using a broad and diverse dataset of objects and scenes (1.3 million photos from ImageNet) and applying a deep learning algorithm (a feed-forward CNN), final models were generated and are available at: Zhang et al - Colorful Image Colorization - models

The output of the model will be the other components, "a" and "b", which, once added to the original "L", return a fully colorized photo, as shown here:

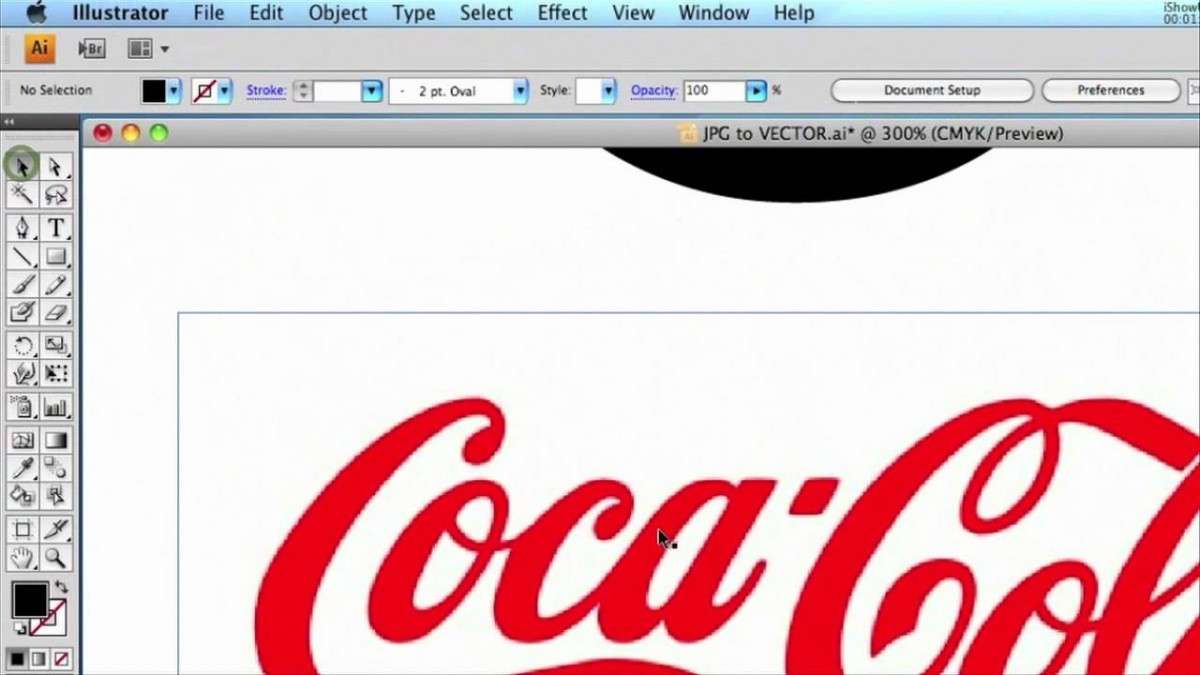

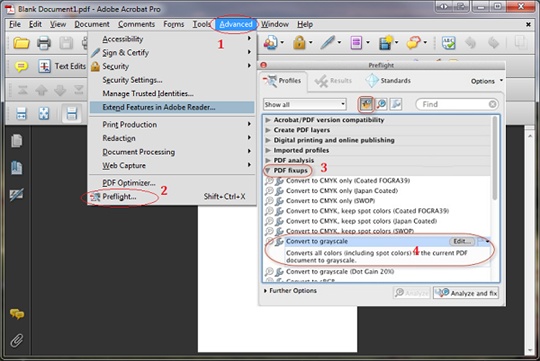

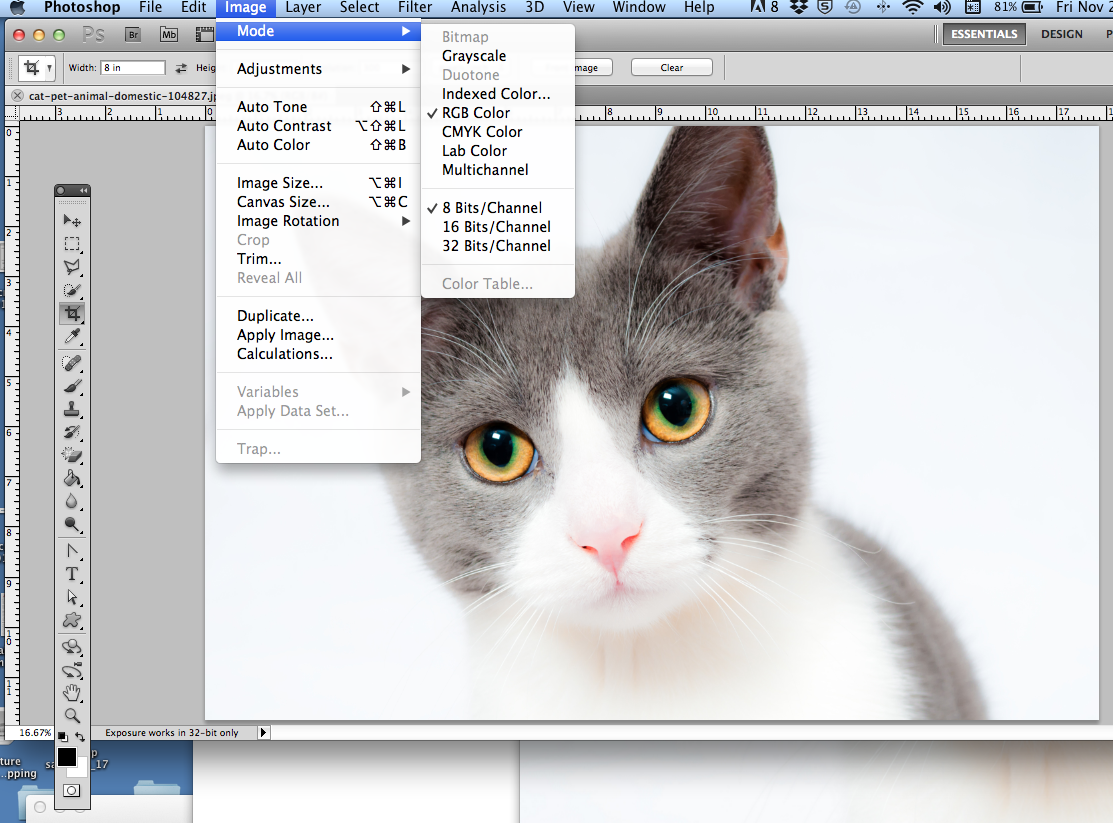
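The recombination step described above (keep the original "L", attach the estimated "a" and "b", convert back to RGB) can be sketched as follows. This is a minimal illustration, not the code used for this project: it assumes the standard sRGB/D65 conversion formulas, the helper names (`lab_to_rgb`, `recombine`) are hypothetical, and the a/b values are hand-written placeholders standing in for real model output.

```python
# Sketch: rebuild RGB pixels from the original L plane plus a/b planes.
# The a/b values used below are placeholders, not real model predictions.

D65_WHITE = (0.95047, 1.0, 1.08883)  # reference white (X, Y, Z)
DELTA = 6 / 29


def _f_inv(t):
    """Inverse of the CIELAB companding function."""
    return t ** 3 if t > DELTA else 3 * DELTA ** 2 * (t - 4 / 29)


def _gamma_encode(c):
    """Linear light -> sRGB-encoded value, after clipping to [0, 1]."""
    c = min(max(c, 0.0), 1.0)
    return 12.92 * c if c <= 0.0031308 else 1.055 * c ** (1 / 2.4) - 0.055


def lab_to_rgb(L, a, b):
    """Convert one CIELAB color (D65) to an 8-bit sRGB triple."""
    fy = (L + 16) / 116
    fx = fy + a / 500
    fz = fy - b / 200
    x, y, z = (w * _f_inv(f) for w, f in zip(D65_WHITE, (fx, fy, fz)))
    r = 3.2404542 * x - 1.5371385 * y - 0.4985314 * z
    g = -0.9692660 * x + 1.8760108 * y + 0.0415560 * z
    bl = 0.0556434 * x - 0.2040259 * y + 1.0572252 * z
    return tuple(round(255 * _gamma_encode(c)) for c in (r, g, bl))


def recombine(l_plane, ab_plane):
    """Pair each original L value with its (a, b) estimate and convert to RGB."""
    return [
        [lab_to_rgb(L, a, b) for L, (a, b) in zip(l_row, ab_row)]
        for l_row, ab_row in zip(l_plane, ab_plane)
    ]


# A 1x2 "photo": lightness from the B&W input, a/b as if estimated by a model.
l_plane = [[50.0, 50.0]]
ab_plane = [[(0.0, 0.0), (60.0, 40.0)]]
colorized = recombine(l_plane, ab_plane)
```

With a = b = 0 the pixel stays gray, while a positive "a" and "b" push the same lightness toward red and yellow. The lightness itself is never changed, which is why the original B&W detail survives colorization.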

The same techniques can be applied to old videos. Here is 1932 B&W footage of the city of Rio de Janeiro, Brazil:

Usually, we encode a color photo using the RGB model. The RGB color model is an additive color model in which red, green, and blue light are added together in various ways to reproduce a broad array of colors. The name of the model comes from the initials of the three additive primary colors: red, green, and blue.

But the model that will be used in this project is "Lab".

The CIELAB color space (also known as CIE L*a*b*, or sometimes abbreviated as simply the "Lab" color space) is a color space defined by the International Commission on Illumination (CIE) in 1976. It expresses color as three numerical values: L* for lightness, and a* and b* for the green-red and blue-yellow color components. The L*a*b* color space was built on the theory of opponent colors, under which a color cannot be both green and red at the same time, or both yellow and blue at the same time. Unlike the RGB color model, Lab color is designed to approximate human vision. It aims to be perceptually uniform, meaning that the same amount of numerical change in these values corresponds to about the same amount of visually perceived change, and its L component closely matches human perception of lightness. The L component is exactly what is used as the input of the AI model, which was trained to estimate the remaining components, "a" and "b".

As noted in the introduction, the Artificial Intelligence (AI) approach is implemented as a feed-forward pass in a CNN ("Convolutional Neural Network") at test time and is trained on over a million color images. In other words, millions of color photos were decomposed using the Lab model and used as an input feature ("L") and classification labels ("a" and "b"). For simplicity, let's split the components in two, "L" and "a+b", as shown in the block diagram:

Having the trained model (which is publicly available), we can use it to colorize a new B&W photo, where this photo is the input of the model, or the component "L".
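The Lab decomposition described above can be sketched in plain Python. This is a minimal illustration under stated assumptions: it uses the standard sRGB-to-CIELAB formulas with a D65 white point, and the function names (`rgb_to_lab`, `split_l_ab`) are hypothetical helpers, not part of any library used in this project.

```python
# Sketch: convert 8-bit sRGB pixels to CIELAB (D65 white point) and split
# the result into the model input ("L") and the targets ("a", "b").

D65_WHITE = (0.95047, 1.0, 1.08883)  # reference white (X, Y, Z)
DELTA = 6 / 29


def _linearize(c8):
    """8-bit sRGB channel -> linear-light value in [0, 1]."""
    c = c8 / 255
    return c / 12.92 if c <= 0.04045 else ((c + 0.055) / 1.055) ** 2.4


def _f(t):
    """CIELAB companding function."""
    return t ** (1 / 3) if t > DELTA ** 3 else t / (3 * DELTA ** 2) + 4 / 29


def rgb_to_lab(r8, g8, b8):
    """Return (L, a, b) for one 8-bit sRGB pixel."""
    r, g, b = (_linearize(c) for c in (r8, g8, b8))
    x = 0.4124564 * r + 0.3575761 * g + 0.1804375 * b
    y = 0.2126729 * r + 0.7151522 * g + 0.0721750 * b
    z = 0.0193339 * r + 0.1191920 * g + 0.9503041 * b
    fx, fy, fz = (_f(v / w) for v, w in zip((x, y, z), D65_WHITE))
    return 116 * fy - 16, 500 * (fx - fy), 200 * (fy - fz)


def split_l_ab(pixels):
    """Split a list of RGB pixels into an L list and a list of (a, b) pairs."""
    lab = [rgb_to_lab(*p) for p in pixels]
    l_channel = [L for L, _, _ in lab]
    ab_channels = [(a, b) for _, a, b in lab]
    return l_channel, ab_channels


# Red, green, blue, and mid-gray test pixels.
pixels = [(255, 0, 0), (0, 255, 0), (0, 0, 255), (128, 128, 128)]
l_channel, ab_channels = split_l_ab(pixels)
```

In this sketch, red lands on the positive side of the "a" axis and green on the negative side, blue lands on the negative side of the "b" axis, and gray sits at roughly a = b = 0. That separation is what lets "L" alone serve as the B&W input.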

The idea of this tutorial is to develop a fully automatic approach that generates realistic colorizations of Black & White (B&W) photos and, by extension, videos. As explained in the original paper, the authors embraced the underlying uncertainty of the problem by posing it as a classification task, using class rebalancing at training time to increase the diversity of colors in the result. The Artificial Intelligence (AI) approach is implemented as a feed-forward pass in a CNN ("Convolutional Neural Network") at test time and is trained on over a million color images.

Here is a photo shot in 1906, showing one of the first tests of Santos Dumont's plane "14-bis" in Paris:

And its colorized version, produced with the models developed using these AI techniques:
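Posing colorization as a classification task means that each pixel's continuous (a, b) pair has to be mapped to a discrete class. The sketch below shows one way this could look. The bin width of 10, the range [-110, 110), and all function names are illustrative assumptions, not values taken from this post. The weighting function is a plain inverse-frequency scheme used here as a stand-in for class rebalancing, not the paper's exact method.

```python
from collections import Counter

GRID = 10           # assumed bin width in a/b units
AB_MIN = -110       # assumed lower bound of the a/b range
BINS_PER_AXIS = 22  # 22 bins of width 10 cover [-110, 110)


def ab_to_class(a, b):
    """Map a continuous (a, b) pair to a single integer class index."""
    ia = min(max(int((a - AB_MIN) // GRID), 0), BINS_PER_AXIS - 1)
    ib = min(max(int((b - AB_MIN) // GRID), 0), BINS_PER_AXIS - 1)
    return ia * BINS_PER_AXIS + ib


def class_to_ab(index):
    """Return the (a, b) center of a class bin."""
    ia, ib = divmod(index, BINS_PER_AXIS)
    return (AB_MIN + GRID * ia + GRID / 2, AB_MIN + GRID * ib + GRID / 2)


def inverse_frequency_weights(labels):
    """Give rare classes larger weights so common, dull colors do not dominate."""
    counts = Counter(labels)
    total = len(labels)
    return {c: total / (len(counts) * n) for c, n in counts.items()}


# Three near-gray pixels and one saturated pixel.
labels = [ab_to_class(a, b) for a, b in [(1, 2), (3, 4), (0, 0), (70, 60)]]
weights = inverse_frequency_weights(labels)
```

Here the single saturated pixel receives a larger weight than the three near-gray ones, which is the intuition behind rebalancing: without it, a model trained mostly on desaturated pixels tends toward washed-out results.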

This project is based on a research work developed at the University of California, Berkeley by Richard Zhang, Phillip Isola, and Alexei A.
